Overview

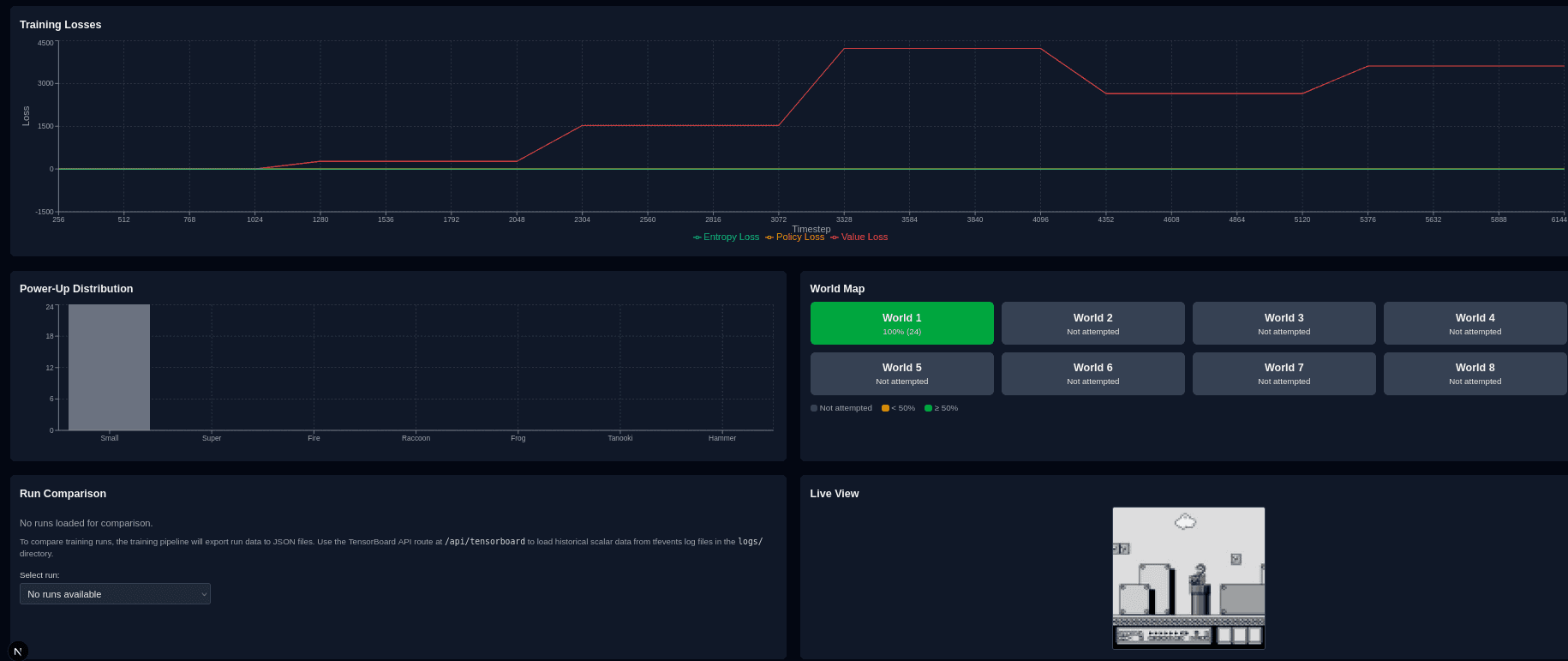

An in-progress machine learning project that trains an AI agent to complete levels of Super Mario Bros 3 using Proximal Policy Optimization (PPO). The agent learns from raw pixel observations through a convolutional neural network, and a curriculum progressively unlocks harder worlds as performance thresholds are met.

Key Features

- PPO (Proximal Policy Optimization) via Stable Baselines3

- CnnPolicy with raw pixel observations (84x84 grayscale, 4-frame stack)

- Curriculum learning with 9 progressive phases across all worlds

- Custom reward shaping (rightward progress, powerups, deaths)

- SubprocVecEnv for parallel environment rollouts

- NES emulation via gym-retro
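
As a rough illustration of the reward shaping listed above, the sketch below combines rightward progress, powerup bonuses, and death penalties into one scalar per step. It is not the project's actual implementation: the weights, the method names, and the `x_pos`, `got_powerup`, and `died` inputs are all assumptions made for the example.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class RewardWeights:
    # Hypothetical weights, chosen only for illustration.
    progress: float = 0.5   # reward per unit of new rightward progress
    powerup: float = 10.0   # bonus when a powerup is collected
    death: float = -25.0    # penalty when a life is lost


class RewardCalculator:
    """Turns per-step game state into a shaped scalar reward (illustrative sketch)."""

    def __init__(self, weights: Optional[RewardWeights] = None):
        self.weights = weights or RewardWeights()
        self.max_x = 0

    def reset(self, start_x: int = 0) -> None:
        # Call at the start of each episode.
        self.max_x = start_x

    def step(self, x_pos: int, got_powerup: bool, died: bool) -> float:
        reward = 0.0
        # Pay only for progress beyond the furthest point reached so far,
        # so walking back and forth cannot farm reward.
        if x_pos > self.max_x:
            reward += self.weights.progress * (x_pos - self.max_x)
            self.max_x = x_pos
        if got_powerup:
            reward += self.weights.powerup
        if died:
            reward += self.weights.death
        return reward
```

Tracking the furthest position reached, rather than the raw change in position, is one common way to keep a progress reward from being exploited; other designs are equally plausible here.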

Project Goals

Train a reinforcement learning agent to complete levels of Super Mario Bros 3 using modern RL techniques. The project explores reward shaping strategies, curriculum learning approaches, and the challenges of learning from raw pixel observations in a complex game environment.

Technical Approach

The agent uses Proximal Policy Optimization (PPO) with a convolutional neural network policy (CnnPolicy) that processes raw pixel observations: 84x84 grayscale frames stacked 4 deep so the network can infer motion, which a single frame cannot show. Training runs across multiple parallel environments using SubprocVecEnv for efficient rollout collection.
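
To make the 4-frame stack concrete, here is a minimal, library-free sketch of the idea. The `FrameStack` class and its padding behavior on reset (repeating the first frame) are assumptions for illustration. In Stable Baselines3 this role is usually filled by the `VecFrameStack` wrapper; whether this project uses it is not stated.

```python
from collections import deque


class FrameStack:
    """Keeps the most recent `size` frames so the policy can infer motion."""

    def __init__(self, size: int = 4):
        self.size = size
        self.frames = deque(maxlen=size)

    def reset(self, first_frame):
        # Assumption: pad the stack by repeating the first frame of the episode.
        self.frames.clear()
        for _ in range(self.size):
            self.frames.append(first_frame)
        return list(self.frames)

    def step(self, frame):
        # Appending to a full deque drops the oldest frame automatically.
        self.frames.append(frame)
        return list(self.frames)
```

Each returned list is ordered oldest to newest, so the difference between neighboring entries carries the velocity information.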

A curriculum learning strategy progressively increases difficulty across 9 phases. The agent starts with just World 1-1 and unlocks additional worlds as mean reward thresholds are met (e.g., a mean reward of 500 plus 500K timesteps to unlock World 1, then a mean reward of 400 plus 1M timesteps to unlock World 2). A custom RewardCalculator module shapes rewards based on rightward progress, powerup collection, and death penalties.
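
The unlock rule described above might look something like the following sketch. Only the two thresholds quoted in the text come from the project. The `Phase` type, the `next_phase_index` helper, the phase names, and the reading that both conditions must hold at once are assumptions, and the remaining phases are left out because their thresholds are not given.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Phase:
    name: str
    min_mean_reward: float  # mean episode reward required to enter this phase
    min_timesteps: int      # total training timesteps required to enter this phase


# First three of the 9 phases; later thresholds are not stated in the text.
PHASES = [
    Phase("World 1-1 only", 0.0, 0),
    Phase("World 1", 500.0, 500_000),
    Phase("World 2", 400.0, 1_000_000),
]


def next_phase_index(current: int, mean_reward: float, timesteps: int) -> int:
    """Advance by at most one phase when both thresholds of the next phase are met."""
    if current + 1 < len(PHASES):
        nxt = PHASES[current + 1]
        # Assumption: reward AND timestep conditions must both be satisfied.
        if mean_reward >= nxt.min_mean_reward and timesteps >= nxt.min_timesteps:
            return current + 1
    return current
```

Requiring a minimum timestep count alongside the reward threshold guards against unlocking a harder phase on the strength of a few lucky episodes early in training.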

Why It's Notable

Demonstrates core ML/RL fundamentals: environment design, reward engineering, policy optimization, and curriculum learning in a universally understood problem domain.

The curriculum learning approach mirrors real-world ML training strategies for complex tasks: start simple and progressively increase difficulty as the model improves.

Highly visual results that make for compelling demos, showing the agent evolve from random flailing to purposeful, skilled gameplay.

Current Status

This project is actively in development. The training pipeline, curriculum system, and reward shaping are implemented. Current focus is on optimizing hyperparameters and extending training runs to achieve consistent level completions across all worlds.

Technologies Used

Project Info

- Category: Machine Learning

- Year: 2025-2026

- Status: In Progress